The term "AI supercomputer price" refers to the cost of high-performance computing (HPC) systems designed specifically for artificial intelligence workloads, including machine learning (ML) training, deep learning (DL), and complex data analytics. Unlike standard desktop PCs, these systems are built around powerful multi-core processors and high-bandwidth memory, and they often incorporate specialized hardware such as GPUs or AI accelerators to handle parallel processing efficiently. Pricing is not a single figure but a wide spectrum that depends heavily on the computational power, memory capacity, storage speed, and specialized components required for the intended scale of AI model.

Key specifications that define an AI computing system's capability and influence its price include:

-

Processor (CPU): High-core-count CPUs (e.g., Intel Core i5/i7/i9, Xeon) are essential for data preprocessing and managing complex workflows.

-

Accelerator (GPU/AI Chip): Dedicated GPUs from NVIDIA (e.g., RTX, A-series, H-series) or other AI accelerators are critical for the parallel matrix operations fundamental to neural network training.

-

Main Memory (RAM): Large RAM capacities (32GB, 64GB, or more) are necessary to hold massive datasets in memory for rapid processing.

-

Storage: High-speed NVMe SSDs (512GB, 1TB, or larger) are required for fast data loading and model checkpointing.

-

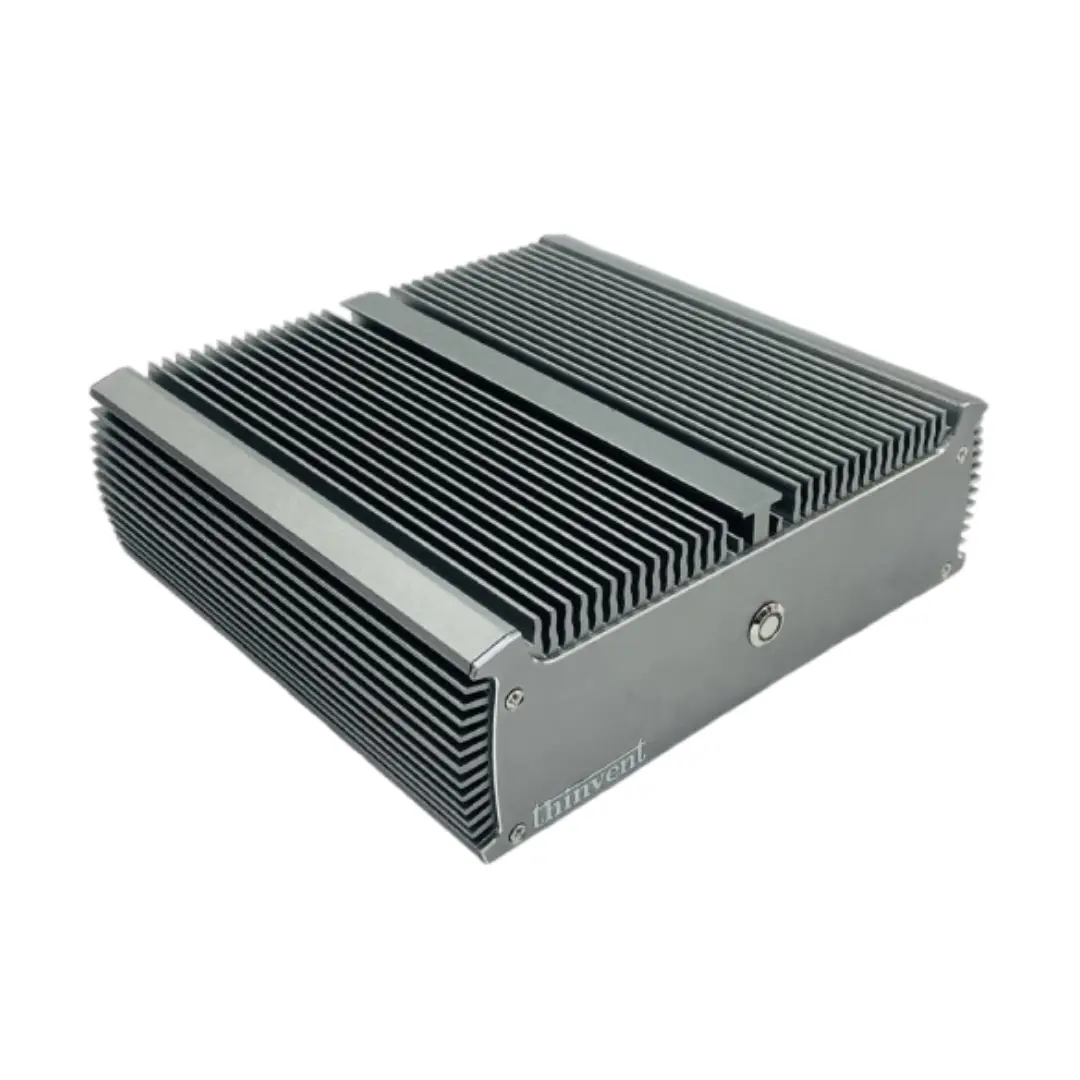

Cooling & Power: Robust thermal solutions (often fanless or with advanced active cooling) and high-wattage power supplies are needed to maintain stability under sustained heavy loads.

These systems are deployed across various demanding applications:

- Edge AI & Inference: Running trained AI models in real-time on factory floors, in retail analytics, or for autonomous vehicle subsystems.
- Research & Development: Training smaller-scale machine learning models in academic or corporate R&D environments.
- Prototyping & Development: Serving as a development workstation for AI software before scaling to larger data center clusters.

| System Tier | Typical Use Case | Key Components | Relative Cost Range |
|---|---|---|---|
| Edge AI / Inference | Real-time analytics, smart kiosks, quality inspection | Intel Core i5/i7, 16-32GB RAM, integrated/mid-range GPU | Entry to Mid |
| AI Workstation | Model development, training small-to-medium models | High-core CPU (i7/i9), 32-64GB+ RAM, high-end GPU (RTX 4080/4090) | Mid to High |
| AI Server / Cluster Node | Large-scale model training, data center deployment | Server-grade CPUs (Xeon), 64GB+ RAM, multiple professional GPUs (A100/H100) | Premium |
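As a rough illustration of how the tiers in the table might be applied, the following Python sketch maps a hardware configuration to a tier and its relative cost range. The `SystemSpec` class, the `classify_tier` function, the category labels (`"integrated"`, `"mid"`, `"high_end"`, `"professional"`), and the exact thresholds are assumptions made for this example, loosely derived from the table; they are not an official sizing method or pricing tool.

```python
from dataclasses import dataclass

# Relative cost ranges taken from the tier table.
COST_RANGE = {
    "Edge AI / Inference": "Entry to Mid",
    "AI Workstation": "Mid to High",
    "AI Server / Cluster Node": "Premium",
}


@dataclass
class SystemSpec:
    """Hypothetical description of a system configuration."""
    cpu_class: str      # assumed labels: "core" (Core i5/i7/i9) or "xeon"
    ram_gb: int         # installed main memory in GB
    gpu_class: str      # assumed labels: "integrated", "mid", "high_end", "professional"
    gpu_count: int = 1  # number of discrete GPUs (ignored for integrated graphics)


def classify_tier(spec: SystemSpec) -> str:
    """Return the tier name that best matches the spec, or "Unclassified"."""
    # Server tier: server-grade CPU, 64GB+ RAM, multiple professional GPUs.
    if (
        spec.cpu_class == "xeon"
        and spec.ram_gb >= 64
        and spec.gpu_class == "professional"
        and spec.gpu_count >= 2
    ):
        return "AI Server / Cluster Node"
    # Workstation tier: high-end GPU with at least 32GB RAM.
    if spec.gpu_class == "high_end" and spec.ram_gb >= 32:
        return "AI Workstation"
    # Edge tier: integrated or mid-range GPU with at least 16GB RAM.
    if spec.gpu_class in ("integrated", "mid") and spec.ram_gb >= 16:
        return "Edge AI / Inference"
    return "Unclassified"


if __name__ == "__main__":
    spec = SystemSpec(cpu_class="core", ram_gb=64, gpu_class="high_end")
    tier = classify_tier(spec)
    print(tier, "->", COST_RANGE.get(tier, "n/a"))
```

Real purchasing decisions depend on more factors than these four fields (storage, cooling, power, and workload size among them), so a rule set like this is best treated as a first-pass filter.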

Thinvent's AI-Ready Computing Solutions

Thinvent offers a range of robust, industrial-grade computing platforms that serve as the foundation for edge AI and inference applications. Our systems are engineered for reliability in challenging environments, featuring fanless designs for silent operation and dust resistance. For AI workloads, we provide configurations with powerful Intel Core processors (i3, i5, and i7 series, 12th to 14th generation), DDR4 RAM up to 64GB, and fast NVMe SSD storage. These compact Mini PCs and Industrial PCs are well suited to deploying trained AI models at the edge for real-time computer vision, predictive maintenance analytics, and intelligent automation. By combining computational power with industrial durability, Thinvent delivers cost-effective and reliable building blocks for your AI infrastructure.