Running Large Language Models (LLMs) locally requires a balance of computational power, memory, and storage. The "best PC" for this task is not one-size-fits-all; it depends on the model size, the desired inference speed, and the budget. For effective local LLM deployment, focus on three core components: a modern multi-core CPU with high clock speeds, enough RAM to hold the model, and fast NVMe SSD storage to shorten model load times.

Key Specifications for Local LLM PCs

The primary bottleneck for local LLMs is often memory (RAM). For CPU-based inference, the model weights are loaded entirely into RAM, so capacity is critical. For smaller 7B-13B parameter models, 16 GB is a practical minimum, while 32 GB or more is recommended for larger 13B+ models; the actual requirement also depends on the numeric precision (quantization) of the weights. Processor performance matters as well: look for recent Intel Core i5/i7 or equivalent processors with high core/thread counts (e.g., 10+ cores/12+ threads) and turbo frequencies above 4.0 GHz to handle the computational load of token generation. Fast PCIe NVMe SSDs (512 GB or more) significantly reduce model load times.
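
The RAM guidance above can be sanity-checked with simple arithmetic: weight memory is roughly the parameter count multiplied by the bytes per weight. The sketch below is an illustration, not a benchmark. The function name `estimate_model_ram_gb` and the 20% overhead allowance for context cache and runtime buffers are assumptions made for this example, and real usage varies by runtime and context length.

```python
def estimate_model_ram_gb(params_billions, bits_per_weight, overhead_fraction=0.2):
    """Rough RAM (in GB, 10^9 bytes) to hold the weights plus runtime overhead.

    overhead_fraction is an assumed allowance for context cache and runtime
    buffers; it is illustrative, not a measured value.
    """
    # Bytes for the weights alone: parameters * bits per weight / 8 bits per byte.
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    # Add the assumed overhead and convert bytes to GB.
    return weight_bytes * (1 + overhead_fraction) / 1e9


if __name__ == "__main__":
    # Compare common precisions for the model sizes discussed in this article.
    for params in (7, 13):
        for bits in (4, 8, 16):
            need = estimate_model_ram_gb(params, bits)
            print(f"{params}B model at {bits}-bit: about {need:.1f} GB")
```

Under these assumptions, a 7B model quantized to 4 bits needs roughly 4 GB, while a 13B model at 16-bit precision needs about 31 GB. The operating system and other applications need memory on top of that, which is why extra headroom is worth planning for.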

Use Cases and Applications

Local LLM deployment is ideal for developers, researchers, and businesses requiring data privacy, offline access, or customized model fine-tuning. Applications include:

- AI-Powered Development: Running code-generation models locally within an IDE.
- Private Data Analysis: Processing sensitive documents or internal data without cloud transmission.
- Research & Experimentation: Fine-tuning open-source models on proprietary datasets.
- Edge AI Solutions: Deploying conversational AI or content-generation tools in secure or remote environments.

Recommended System Tiers

| Use Case / Model Size | Recommended CPU (Intel) | Minimum RAM | Recommended RAM | Storage |
|---|---|---|---|---|
| Lightweight / 7B Params | Core i3-1215U / i5-1240P | 8 GB | 16 GB | 256 GB NVMe |
| Balanced / 13B Params | Core i5-1240P / i5-1250P | 16 GB | 32 GB | 512 GB NVMe |
| High-Performance / 13B+ Params | Core i5-1335U / i5-1340P | 32 GB | 64 GB | 1 TB NVMe |
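
For scripted provisioning, the tiers in the table can be encoded as a small lookup. This is a minimal sketch: the `Tier` class and `recommend_tier` function are hypothetical names introduced for illustration, and the choice to map any model between 7B and 13B parameters to the Balanced tier is an assumption, since the table only lists the 7B, 13B, and 13B+ points.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    cpus: tuple
    min_ram_gb: int
    recommended_ram_gb: int
    storage: str


# Values copied from the tier table in this article.
TIERS = (
    Tier("Lightweight", ("Core i3-1215U", "Core i5-1240P"), 8, 16, "256 GB NVMe"),
    Tier("Balanced", ("Core i5-1240P", "Core i5-1250P"), 16, 32, "512 GB NVMe"),
    Tier("High-Performance", ("Core i5-1335U", "Core i5-1340P"), 32, 64, "1 TB NVMe"),
)


def recommend_tier(params_billions: float) -> Tier:
    """Return the table tier for a model size given in billions of parameters."""
    if params_billions <= 0:
        raise ValueError("params_billions must be positive")
    if params_billions <= 7:
        return TIERS[0]
    # Assumption: sizes between 7B and 13B fall into the Balanced tier.
    if params_billions <= 13:
        return TIERS[1]
    return TIERS[2]


if __name__ == "__main__":
    for size in (7, 13, 34):
        tier = recommend_tier(size)
        print(f"{size}B -> {tier.name}: {tier.recommended_ram_gb} GB RAM, {tier.storage}")
```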

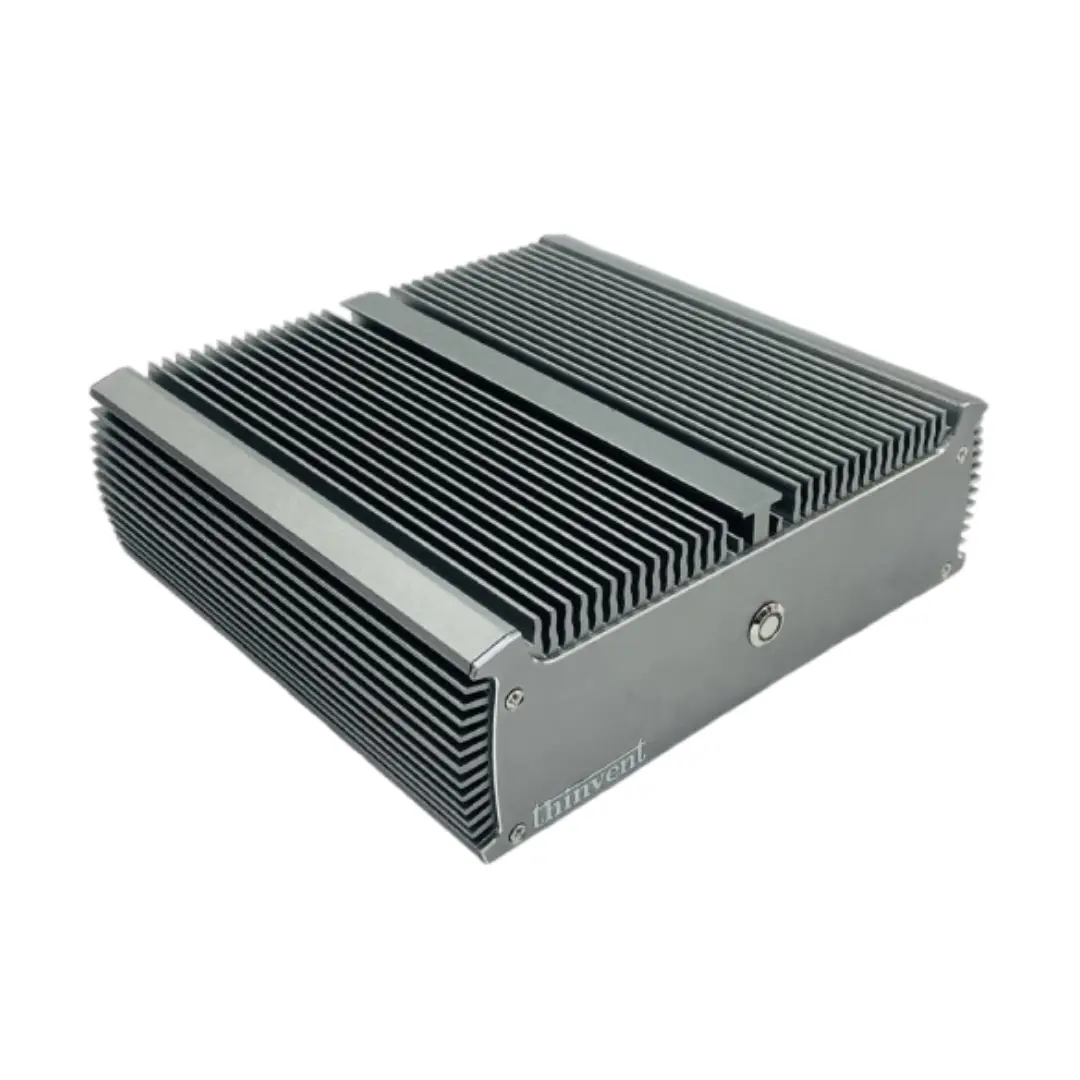

Thinvent PCs for Local LLM Deployment

Thinvent's industrial and mini PC lineup offers robust, reliable platforms well suited to local AI workloads. For demanding LLM inference, the Industrial PC IPC5 with a 12-core Intel Core i5-1240P processor, 16 GB RAM, and 512 GB SSD provides a good balance of performance and expandability. For users who need maximum performance in a compact form factor, systems featuring 14th Gen Intel Core processors, like the Aero Mini PC with an Intel Core 5 120U (10 cores, up to 5.0 GHz), 16 GB RAM, and 512 GB SSD, deliver the single-threaded and multi-threaded performance needed for fast token generation. These fanless or actively cooled systems are built for stable operation under sustained loads, making them a good fit for dedicated AI workstations.