What is the Best PC to Run an LLM?

The best PC for running a Large Language Model (LLM) locally is a high-performance workstation with a powerful multi-core processor, substantial RAM, and fast SSD storage. Unlike typical office workloads, LLMs need significant computational resources both to load the model and to run inference (generating responses). The core requirements are a modern multi-core CPU (Intel Core i5/i7 or equivalent), at least 16GB of RAM (32GB or more for larger models), and a fast NVMe SSD for quick model loading and data swapping.
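As a rough way to compare a machine against these baseline figures, the following sketch reads the core count, installed RAM, and free disk space using only the Python standard library. The function names and the 10% tolerance are illustrative assumptions, and the RAM lookup relies on `os.sysconf`, which is available on Linux and most Unix-like systems but not on Windows.

```python
import os
import shutil

# RAM thresholds taken from the text above.
MIN_RAM_GB = 16
IDEAL_RAM_GB = 32
# Assumption: a machine sold with 16 GB usually reports slightly less,
# so allow a 10% tolerance before calling it "below minimum".
TOLERANCE = 0.9


def total_ram_gb():
    """Return installed RAM in GiB, or None if the platform does not expose it."""
    try:
        page_size = os.sysconf("SC_PAGE_SIZE")
        page_count = os.sysconf("SC_PHYS_PAGES")
    except (AttributeError, ValueError, OSError):
        # os.sysconf is missing on Windows; unknown names raise ValueError.
        return None
    return page_size * page_count / 1024**3


def classify_ram(ram_gb):
    """Map an amount of RAM to the rough tiers described in the text."""
    if ram_gb < MIN_RAM_GB * TOLERANCE:
        return "below minimum"
    if ram_gb < IDEAL_RAM_GB * TOLERANCE:
        return "minimum"
    return "ideal"


if __name__ == "__main__":
    print("logical CPU cores:", os.cpu_count())
    ram = total_ram_gb()
    if ram is None:
        print("RAM: unknown on this platform")
    else:
        print(f"RAM: {ram:.1f} GiB ({classify_ram(ram)})")
    free_gb = shutil.disk_usage(os.getcwd()).free / 1024**3
    print(f"free disk space: {free_gb:.1f} GiB")
```

The script only reports what the operating system exposes; it does not measure how fast the CPU or SSD actually is.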

Key Technical Specifications

For effective local LLM operation, focus on these hardware components:

-

Processor (CPU): A modern, multi-core processor is essential. Intel Core i5, i7, or i9 series from the 12th generation or newer are recommended. More cores allow for better parallel processing of model layers.

-

Memory (RAM): System RAM is critical as the entire model must be loaded into memory. For 7B parameter models, 16GB is a functional minimum. For 13B+ parameter models, 32GB or 64GB is strongly advised to prevent slowdowns.

-

Storage (SSD): A fast NVMe PCIe SSD (512GB or larger) drastically reduces model load times and improves overall system responsiveness when handling large datasets.

-

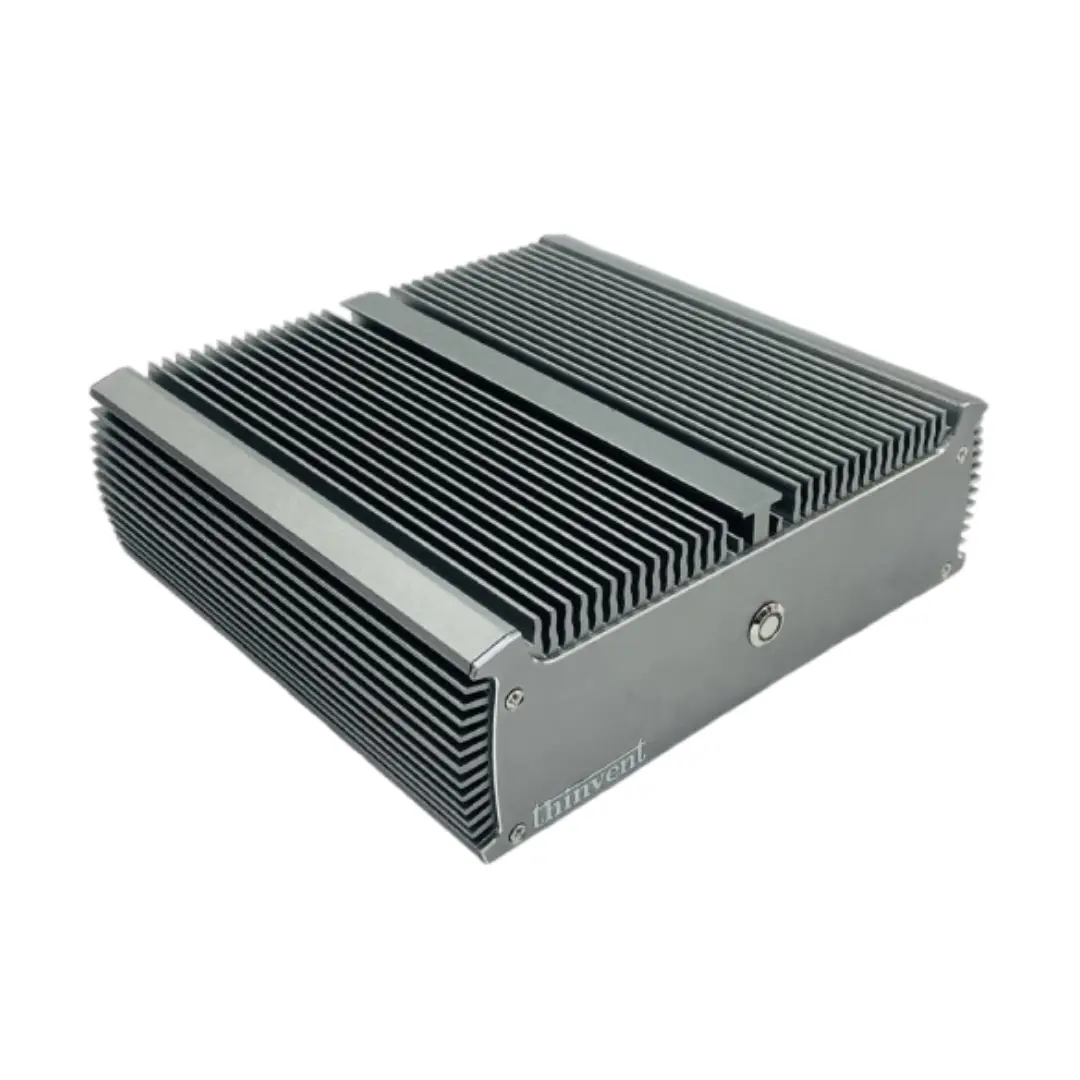

Form Factor: While performance is key, industrial-grade Mini PCs or compact workstations offer a robust, fanless solution for 24/7 operation in environments like research labs, digital signage, or edge AI deployments.
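The RAM and storage guidance above can be checked with simple arithmetic: the size of the weights is roughly the parameter count times the bytes stored per weight. The sketch below works this out under stated assumptions. The text does not discuss numeric precision, so `bits_per_weight`, the 4 GB `reserve_gb` allowance for the operating system and runtime, and the drive read speeds are all illustrative values rather than measured figures.

```python
# Assumed ballpark sequential-read speeds in GB/s; real drives vary.
ASSUMED_READ_GBPS = {"nvme": 3.5, "sata_ssd": 0.5}


def weights_size_gb(params_billion, bits_per_weight=16):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_ram(params_billion, ram_gb, bits_per_weight=16, reserve_gb=4):
    """True if the weights plus a reserve for the OS and runtime fit in RAM.

    reserve_gb is an assumed allowance, not a measured figure.
    """
    needed = weights_size_gb(params_billion, bits_per_weight) + reserve_gb
    return needed <= ram_gb


def load_time_seconds(params_billion, drive="nvme", bits_per_weight=16):
    """Best-case time to read the weights from disk at the assumed speed."""
    size = weights_size_gb(params_billion, bits_per_weight)
    return size / ASSUMED_READ_GBPS[drive]


if __name__ == "__main__":
    for params in (7, 13, 70):
        for bits in (16, 4):
            size = weights_size_gb(params, bits)
            print(f"{params}B at {bits}-bit: ~{size:.1f} GB of weights")
```

Under these assumptions, a 7B model stored at 16 bits per weight needs about 14 GB for the weights alone, which leaves less than the assumed reserve on a 16GB machine, while an 8-bit or 4-bit version of the same model fits comfortably. The load-time figure is a lower bound, since it ignores file-system and parsing overhead.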

Use Cases and Applications

Running LLMs on a local PC is ideal for scenarios requiring data privacy, low-latency responses, or offline operation.

- Research & Development: Prototyping AI applications and fine-tuning models on proprietary datasets.
- Edge AI & IoT: Deploying intelligent chatbots or analysis tools in remote locations without cloud dependency.
- Content Creation & Automation: Generating drafts, translations, or code in a secure, controlled environment.
- Educational Purposes: Learning about AI model architecture and inference without incurring cloud API costs.

Recommended System Comparison

| Use Case | Recommended CPU Series | Minimum RAM | Ideal Storage | Notes |
|---|---|---|---|---|
| Lightweight/7B Models | Intel Core i5 / N-series | 16 GB | 512 GB SSD | Good for experimentation and smaller models. |
| Mainstream/13B Models | Intel Core i5 / i7 | 32 GB | 1 TB NVMe SSD | Balanced performance for most development work. |
| Heavy/70B+ Models | Intel Core i7 / i9 | 64 GB+ | 2 TB+ NVMe SSD | Requires high-end workstation components. |
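For scripting a quick recommendation, the table can be expressed as a small lookup. This is a minimal sketch: the `Tier` class and `recommend_tier` function are hypothetical names, and because the table does not say where models between 13B and 70B belong, the code assumes they fall into the heavy tier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    cpu: str
    min_ram_gb: int
    storage: str


# (upper bound in billions of parameters, tier) pairs copied from the table.
TIERS = [
    (7, Tier("Lightweight", "Intel Core i5 / N-series", 16, "512 GB SSD")),
    (13, Tier("Mainstream", "Intel Core i5 / i7", 32, "1 TB NVMe SSD")),
]
# Assumption: anything larger than 13B is treated as the heavy tier.
HEAVY = Tier("Heavy", "Intel Core i7 / i9", 64, "2 TB+ NVMe SSD")


def recommend_tier(params_billion):
    """Return the table row that covers a model of the given size."""
    for upper_bound, tier in TIERS:
        if params_billion <= upper_bound:
            return tier
    return HEAVY


if __name__ == "__main__":
    for size in (7, 13, 70):
        tier = recommend_tier(size)
        print(f"{size}B -> {tier.name}: {tier.cpu}, {tier.min_ram_gb} GB RAM, {tier.storage}")
```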

Thinvent PCs for LLM Workloads

Thinvent offers a range of industrial computers well suited to local LLM deployment. Our systems prioritize reliability, sustained performance, and fanless cooling for silent, maintenance-free operation in demanding settings. For LLM tasks, we recommend our high-performance Mini PC and Industrial PC lines, which are equipped with the latest Intel Core processors (i3, i5, i7) and can be configured with up to 64GB of RAM and high-speed NVMe storage. These rugged machines provide the computational power needed for AI inference while ensuring long-term stability for continuous operation.