A GPU server is a specialized computing system designed to apply the parallel processing power of multiple Graphics Processing Units (GPUs) to demanding computational tasks beyond traditional graphics rendering. Unlike standard servers, which rely mainly on their CPUs, these systems are engineered with high-wattage power supplies, advanced cooling, and high-bandwidth interconnects (such as PCIe Gen 4/5) to support multiple high-end GPUs. They are the cornerstone of modern high-performance computing (HPC), artificial intelligence (AI), machine learning (ML), deep learning, and complex scientific simulations.

The core specifications that define a GPU server's capability are the number and model of GPUs (e.g., NVIDIA H100, A100, L40S), the supporting CPU platform (typically high-core-count Intel Xeon or AMD EPYC processors), the amount of high-speed system RAM (often 256 GB to 1 TB or more), and fast NVMe storage arrays. These components work together to handle massive datasets and complex algorithms. Key applications include training large language models (LLMs), real-time inference, autonomous vehicle development, financial modeling, genomic sequencing, and rendering for visual effects.
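
As a rough illustration of how GPU count and memory relate to model size, the following Python sketch estimates the memory needed to hold a model. The bytes-per-parameter figures are common rules of thumb (2 bytes for FP16 weights at inference, roughly 16 bytes per parameter for mixed-precision training with the Adam optimizer). The 80 GB per-GPU capacity, the 7-billion-parameter model, and the function names are illustrative assumptions, not specifications of any particular server. Activations, batch size, and framework overhead are ignored, so real requirements will be higher.

```python
import math

# Rule-of-thumb bytes per parameter (assumptions, not vendor figures).
BYTES_FP16_INFERENCE = 2        # FP16/BF16 weights only
BYTES_MIXED_ADAM_TRAINING = 16  # 2 (weights) + 2 (gradients) + 12 (FP32 master weights and Adam moments)


def model_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Approximate memory for model state in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus(required_gb: float, gpu_memory_gb: float) -> int:
    """Smallest GPU count whose combined memory covers required_gb."""
    return math.ceil(required_gb / gpu_memory_gb)


# Hypothetical 7-billion-parameter model on hypothetical 80 GB GPUs.
params = 7e9
inference_gb = model_memory_gb(params, BYTES_FP16_INFERENCE)
training_gb = model_memory_gb(params, BYTES_MIXED_ADAM_TRAINING)

print(inference_gb)                # 14.0
print(training_gb)                 # 112.0
print(min_gpus(training_gb, 80))   # 2
```

Under these assumptions the same model fits on a single GPU for inference but needs at least two for training, which is why GPU count and interconnect matter more for training workloads.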

When comparing GPU servers, several factors are critical:

| Feature | Importance for AI/Compute Workloads |
|---|---|
| GPU Count & Model | Determines raw parallel processing power (TFLOPS) and memory bandwidth for model training. |
| GPU Interconnect (NVLink/NVSwitch) | Enables high-speed communication between GPUs, crucial for scaling performance across multiple cards. |
| System Memory (RAM) | Must be sufficient to hold large datasets and model parameters in memory for efficient processing. |
| Storage I/O | High-throughput NVMe SSDs in RAID configurations prevent data bottlenecks during training. |
| Network Connectivity | High-speed Ethernet (25/100GbE) or InfiniBand is essential for multi-server cluster communication. |
| Cooling & Power | Robust thermal design and high-wattage PSUs (1600W+) are required to sustain GPU performance under full load. |
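
The Cooling & Power row can be made concrete with a simple power budget. The sketch below sums component power draws and adds headroom. Every wattage, the 20% headroom, the N+1 redundancy policy, and the function names are assumptions chosen for illustration; they should be replaced with figures from the actual component datasheets.

```python
import math


def required_psu_watts(gpu_count, gpu_watts, cpu_count, cpu_watts,
                       other_watts=300, headroom_pct=20):
    """Total supply capacity needed: component load plus a headroom margin.

    other_watts is a placeholder for RAM, storage, fans, and NICs.
    """
    load = gpu_count * gpu_watts + cpu_count * cpu_watts + other_watts
    return math.ceil(load * (100 + headroom_pct) / 100)


def psus_needed(required_watts, psu_watts=1600, redundant=True):
    """Number of PSUs to cover the load, plus one spare if redundant (N+1)."""
    count = math.ceil(required_watts / psu_watts)
    return count + 1 if redundant else count


# Hypothetical server: 4 GPUs at 350 W each, 2 CPUs at 250 W each.
budget = required_psu_watts(gpu_count=4, gpu_watts=350,
                            cpu_count=2, cpu_watts=250)
print(budget)               # 2640
print(psus_needed(budget))  # 3
```

With these illustrative numbers, a four-GPU system already exceeds what a single 1600 W supply can deliver, so multiple PSUs are needed even before redundancy is considered.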

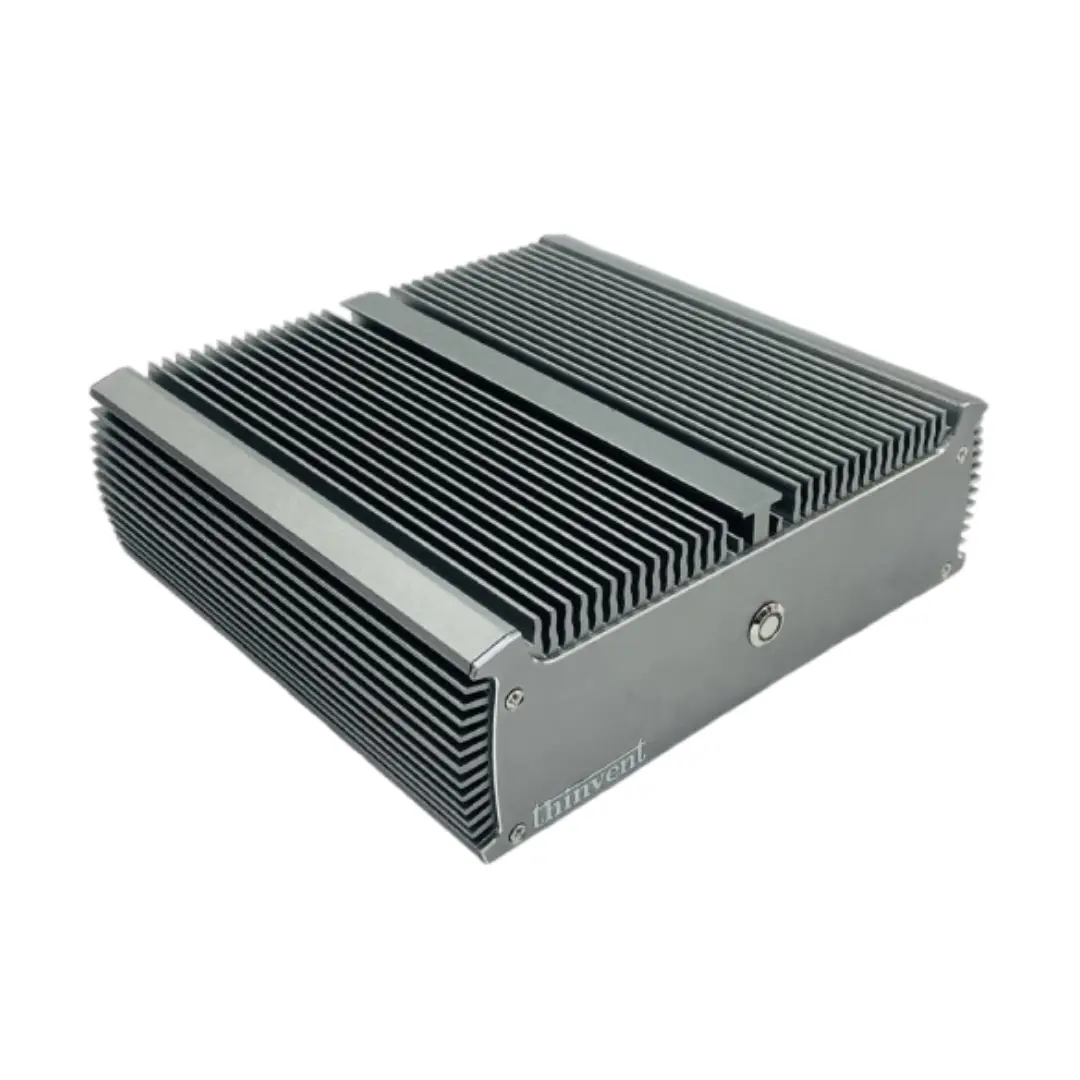

Thinvent Solutions for Accelerated Computing

While Thinvent specializes in compact, reliable industrial and embedded computing solutions, our high-performance product lines are engineered to support accelerated workloads at the edge and in constrained environments. Our Industrial PC (IPC) and Aero Mini PC series feature powerful Intel Core i5 and i7 processors from the latest generations, paired with substantial DDR4/DDR5 memory and fast SSD storage. These systems provide a robust, fanless, and durable platform that can drive external GPU enclosures over Thunderbolt or high-speed PCIe connections, or be integrated into larger systems that need reliable control and data aggregation for AI inference nodes. For deployments that demand ruggedness, low power consumption, and consistent performance in industrial settings, Thinvent's solutions offer a compelling foundation for building distributed, edge-focused AI and compute applications.