A Jetson Cluster is a multi-node computing system built from NVIDIA Jetson modules and designed for scalable edge AI and machine learning workloads. It combines several Jetson devices (such as the Jetson Orin, Xavier, or Nano) into a distributed platform that can handle parallel processing tasks such as real-time computer vision, autonomous navigation, and large-scale sensor data analysis. Unlike traditional server clusters, Jetson Clusters prioritize low power draw and compact size, and they can be packaged in fanless enclosures for rugged industrial environments. That makes them well suited to field operations, robotics, and smart city infrastructure.

Key specifications for a robust Jetson Cluster include multiple nodes with multi-core ARM processors (up to 12 cores on the Jetson AGX Orin), substantial GPU resources (from 128 CUDA cores on the original Jetson Nano to 2,048 CUDA cores and 64 Tensor cores on the Jetson AGX Orin), and interconnects such as Gigabit Ethernet or PCIe for node-to-node communication. Memory ranges from 4GB of LPDDR4 on the original Nano to 64GB of LPDDR5 on the AGX Orin, with storage typically using NVMe SSDs for fast data access. The software stack is centered on NVIDIA's JetPack SDK, which includes Jetson Linux, CUDA, cuDNN, and TensorRT, and supports containerized deployments through Docker. Kubernetes, or a lightweight distribution such as K3s, can be installed on top for cluster management and orchestration.
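To make the per-module figures concrete, the following minimal Python sketch totals GPU and memory resources across a small cluster. The hostnames, the four-node mix, and the `JetsonNode` and `summarize` names are hypothetical and chosen only for illustration; the per-module numbers are the published figures for the Jetson AGX Orin 64GB and Jetson Orin Nano 8GB and should be checked against the datasheet for the exact modules in use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JetsonNode:
    """One cluster node. Hostnames here are hypothetical."""
    hostname: str
    module: str
    cuda_cores: int
    tensor_cores: int
    memory_gb: int


def summarize(nodes):
    """Return aggregate GPU and memory resources across all nodes."""
    return {
        "nodes": len(nodes),
        "cuda_cores": sum(n.cuda_cores for n in nodes),
        "tensor_cores": sum(n.tensor_cores for n in nodes),
        "memory_gb": sum(n.memory_gb for n in nodes),
    }


# Hypothetical four-node inventory mixing two module types.
CLUSTER = [
    JetsonNode("edge-01", "Jetson AGX Orin 64GB", 2048, 64, 64),
    JetsonNode("edge-02", "Jetson AGX Orin 64GB", 2048, 64, 64),
    JetsonNode("edge-03", "Jetson Orin Nano 8GB", 1024, 32, 8),
    JetsonNode("edge-04", "Jetson Orin Nano 8GB", 1024, 32, 8),
]

if __name__ == "__main__":
    print(summarize(CLUSTER))
```

These totals are a planning aid rather than a single pool of resources: each module's memory and GPU are local to that node, so a workload has to be partitioned across nodes to use them together.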

Primary use cases for Jetson Clusters span industries that require intensive, localized AI processing. In robotics, they power swarm intelligence and collaborative autonomous systems. In smart manufacturing, they enable real-time quality inspection across multiple production lines. For autonomous vehicles and drones, clusters provide the computational backbone for sensor fusion and path planning. Digital signage and interactive kiosks use them for multi-camera analytics and personalized content delivery. When packaged in fanless, rugged enclosures, they also suit harsh environments such as agriculture, mining, and outdoor surveillance, where reliability and silent operation are critical.

| Aspect | Jetson Cluster | Traditional x86 Server Cluster |
|---|---|---|
| Core Architecture | ARM-based with integrated NVIDIA GPU | x86 (Intel/AMD) with discrete GPU options |
| Power Profile | Ultra-low power, often fanless | High power, requires active cooling |
| Deployment | Edge & rugged environments | Data center environments |
| AI Acceleration | Native, integrated Tensor & CUDA cores | Requires add-in cards or high-end CPUs |
| Typical Use Case | Distributed edge AI, robotics, IoT gateways | Enterprise computing, cloud services, virtualization |

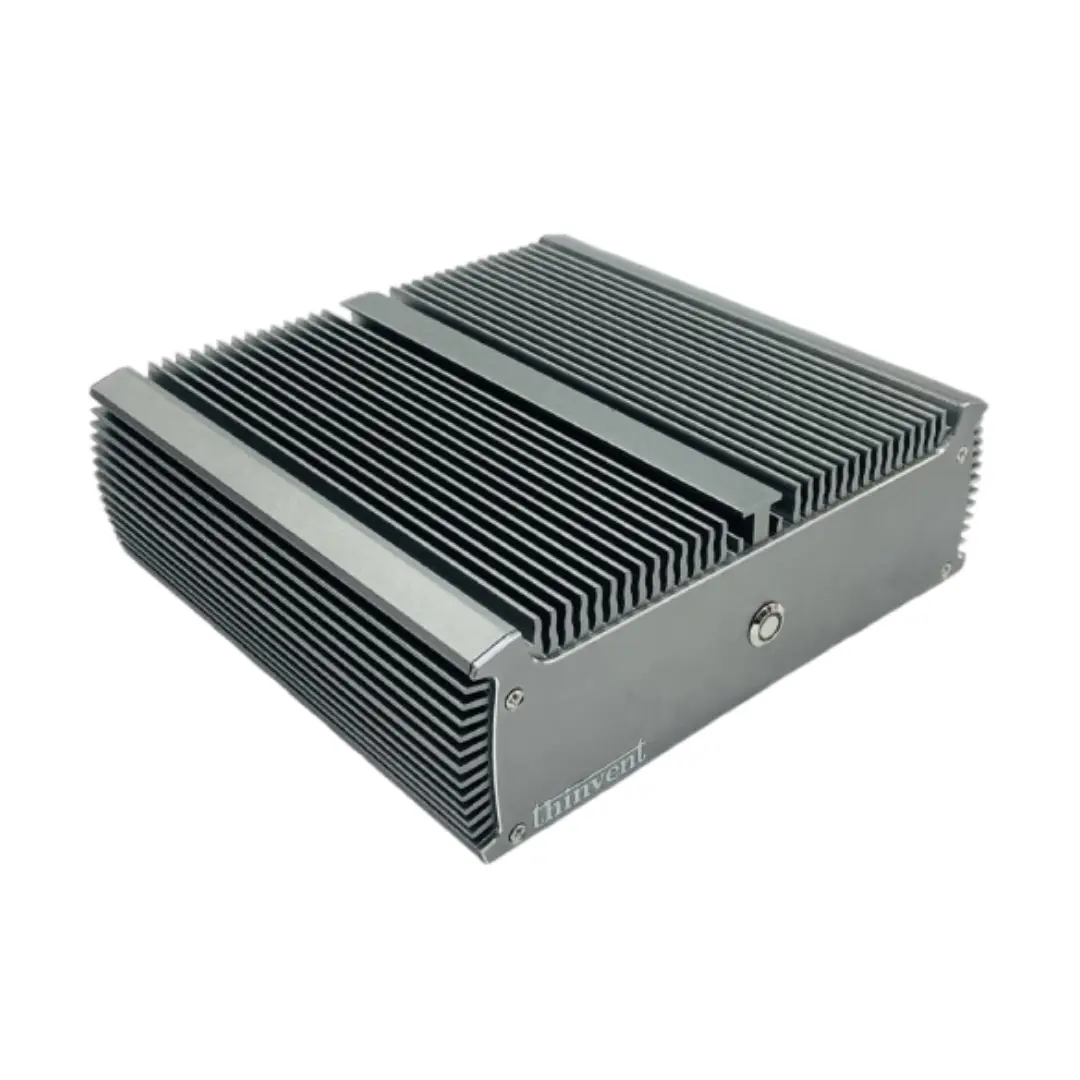

Thinvent Solutions for Edge AI Clustering

While Thinvent specializes in robust, fanless industrial computers with Intel architecture, our product philosophy aligns closely with the demands of edge AI clustering: reliability, compact form factors, and versatile connectivity. For applications where an x86 architecture is preferred or required for software compatibility, Thinvent's range of Mini PCs and Industrial PCs offers compelling alternatives or complementary nodes in a heterogeneous cluster. Our systems feature efficient Intel processors, ample RAM and SSD storage, multiple Ethernet ports for networking, and support for Linux distributions suited to containerized workloads. This makes them suitable for building hybrid clusters or for serving as management and data aggregation nodes alongside specialized AI accelerators, providing a complete, hardened solution for industrial edge computing deployments.
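As a rough illustration of how a hybrid cluster might route work, the Python sketch below filters nodes by CPU architecture and GPU availability. It is not a description of any Thinvent or NVIDIA tool: the node names, workload names, and the `Node`, `Workload`, and `eligible_nodes` helpers are all hypothetical. In a Kubernetes deployment, the same idea is usually expressed with a node selector on the well-known `kubernetes.io/arch` label (`arm64` for Jetson modules, `amd64` for Intel-based nodes).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Node:
    name: str      # hypothetical hostname
    arch: str      # "arm64" (Jetson) or "amd64" (x86)
    has_gpu: bool


@dataclass(frozen=True)
class Workload:
    name: str
    needs_gpu: bool
    arch: Optional[str] = None  # None means any architecture is acceptable


def eligible_nodes(workload: Workload, nodes: List[Node]) -> List[str]:
    """Return the names of nodes that satisfy the workload's constraints."""
    return [
        n.name
        for n in nodes
        if (not workload.needs_gpu or n.has_gpu)
        and (workload.arch is None or n.arch == workload.arch)
    ]


# Hypothetical hybrid cluster: two Jetson nodes plus one x86 management node.
NODES = [
    Node("jetson-01", "arm64", True),
    Node("jetson-02", "arm64", True),
    Node("x86-mgmt-01", "amd64", False),
]

# Hypothetical workloads with different placement constraints.
WORKLOADS = [
    Workload("camera-inference", needs_gpu=True),
    Workload("x86-only-bridge", needs_gpu=False, arch="amd64"),
    Workload("metrics-aggregator", needs_gpu=False),
]

if __name__ == "__main__":
    for w in WORKLOADS:
        print(w.name, "->", eligible_nodes(w, NODES))
```

The sketch only decides eligibility. A real scheduler would also weigh current load, memory headroom, and data locality before placing a workload.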