Running Large Language Models (LLMs) locally places heavy demands on both compute and memory. A PC suited to local LLM inference should prioritize a powerful multi-core CPU, substantial RAM, and fast storage so it can load and run multi-billion-parameter models efficiently.

Key Specifications for Local LLM PCs

The primary bottleneck for local LLMs is often memory bandwidth and capacity. Key specifications to look for include:

- High Core-Count CPU: Modern Intel Core i5/i7 processors (e.g., 12th Gen and newer) with Performance (P) and Efficiency (E) cores are ideal. They provide the parallel processing power needed for model inference.

- Ample System Memory (RAM): A minimum of 16GB is essential for smaller 7B-parameter models (typically run in quantized form), while 32GB or more is recommended for 13B+ models to ensure smooth operation without constant swapping.

- Fast SSD Storage: NVMe SSDs (512GB or larger) drastically reduce model load times compared to traditional hard drives or eMMC storage.

-

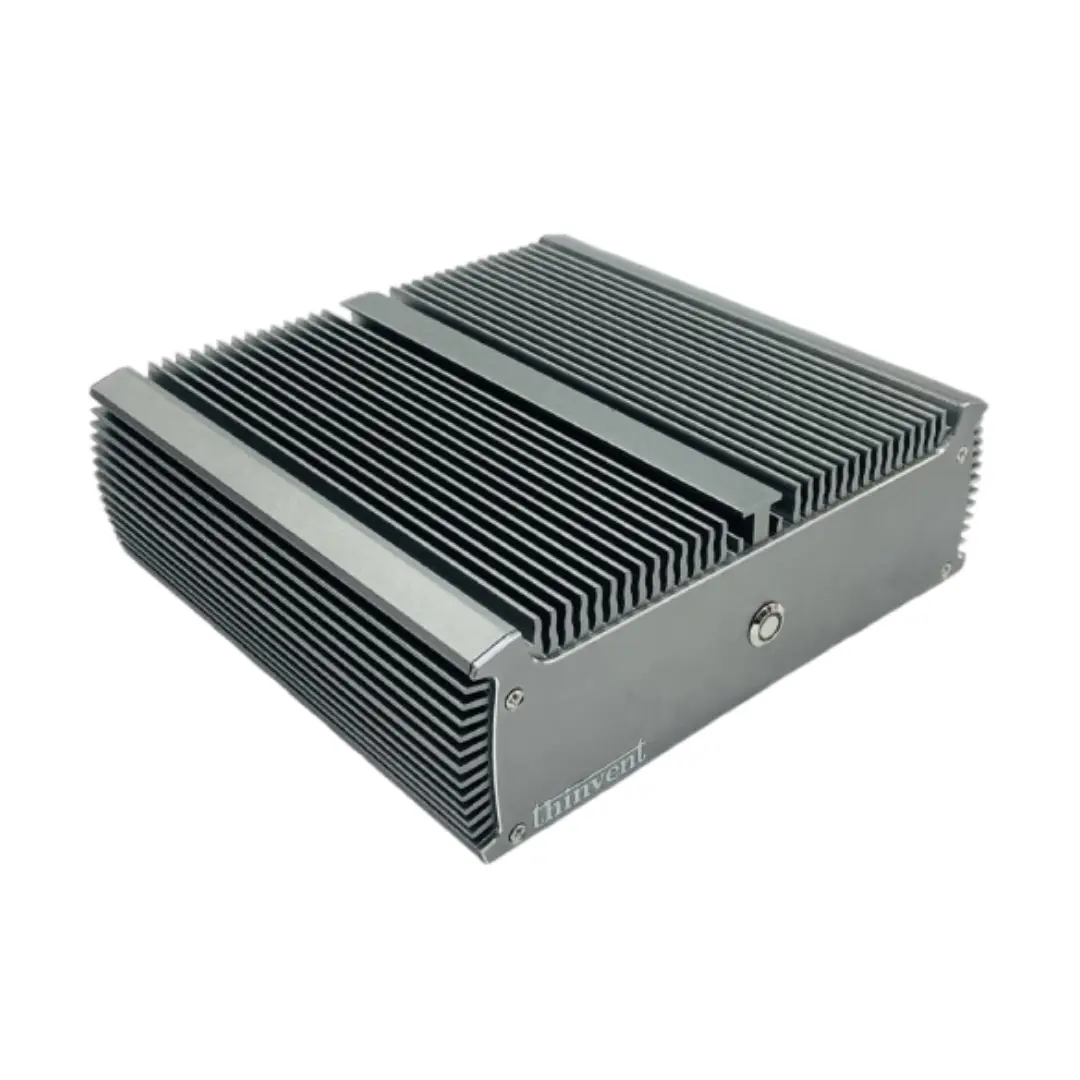

Efficient Cooling: Sustained performance requires robust thermal design. Fanless or actively cooled industrial PCs are excellent for maintaining clock speeds during long inference sessions.
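Because memory capacity and bandwidth are the main constraints, a back-of-the-envelope calculation helps relate these specifications to real models. The Python sketch below rests on stated assumptions: weight storage dominates memory use (parameters × bits per weight ÷ 8), a hypothetical 2GB allowance covers the KV cache and runtime buffers, and token generation is memory-bandwidth bound, so each generated token reads every weight from RAM once. The 51.2GB/s figure is an example value for dual-channel DDR4-3200, not a measurement of any particular PC.

```python
# Back-of-the-envelope sizing for local LLM inference.
# Assumptions (illustrative, not vendor figures): weights dominate memory use,
# the runtime overhead allowance is a guess, and decoding is bandwidth bound.


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    # billions of parameters * bits per parameter / 8 bits per byte
    return params_billion * bits_per_weight / 8


def estimated_ram_gb(params_billion: float, bits_per_weight: float,
                     overhead_gb: float = 2.0) -> float:
    """Weights plus an assumed allowance for KV cache and runtime buffers (OS excluded)."""
    return weights_gb(params_billion, bits_per_weight) + overhead_gb


def max_tokens_per_sec(params_billion: float, bits_per_weight: float,
                       bandwidth_gb_s: float) -> float:
    """Upper bound on generation speed if every token reads all weights from RAM once."""
    return bandwidth_gb_s / weights_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    # 51.2 GB/s = dual-channel DDR4-3200 (2 channels x 8 bytes x 3200 MT/s); example only.
    bandwidth = 51.2
    for params, bits in [(7, 4), (7, 16), (13, 4), (13, 16)]:
        print(f"{params}B @ {bits}-bit: ~{estimated_ram_gb(params, bits):.1f} GB RAM, "
              f"at most ~{max_tokens_per_sec(params, bits, bandwidth):.1f} tokens/s")
```

Under these assumptions a 4-bit 7B model needs about 5.5GB, while the same model at 16-bit needs about 16GB before the operating system is counted, which is why quantization matters on 16GB machines. Real throughput will be lower than the bound because compute, caching, and software overheads are ignored.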

Ideal Use Cases and Applications

Deploying LLMs locally on a dedicated PC is perfect for scenarios requiring data privacy, low-latency responses, or offline operation. Common applications include:

- Private AI Assistants: For businesses handling sensitive legal, financial, or other confidential documents.

- Development & Prototyping: AI researchers and software developers testing and fine-tuning open-source models.

- Content Generation Workstations: Creating marketing copy, translations, or code in secure, controlled environments.

- Educational and Research Labs: Providing students and researchers with hands-on AI experimentation tools.

Thinvent PCs for Local LLM Workloads

Thinvent offers a range of industrial and mini PCs that meet the rigorous demands of local AI inference. Our systems are built for reliability and sustained performance in diverse environments.

For Entry-Level / Smaller Models:

- Thinvent Aero & Treo Series with Intel N100: A capable and power-efficient starting point for experimenting with quantized versions of smaller LLMs (e.g., 7B parameters).

For Mainstream Performance:

- Thinvent Industrial PC IPC3 with Intel Core i3-1215U: A balanced option with 6 cores (2P + 4E) and support for up to 64GB RAM, suitable for 7B-13B model inference.

- Thinvent Aero Series with Intel Core 5 120U: Featuring 10 cores (2P + 8E), this mini PC delivers excellent performance for a wide range of models.

For High-Performance Workloads:

- Thinvent Industrial PC IPC5 with Intel Core i5-1250P: This is an ideal workstation for serious local AI. With 12 cores (4P + 8E), high turbo frequencies, and support for ample RAM and storage, it can handle larger 13B+ parameter models effectively, making it a powerful, compact AI inference server.

All Thinvent systems offer the flexibility to be configured with your choice of operating system (Windows 11 Pro or Ubuntu Linux) and can be specified with the memory and storage that best fits your AI project's requirements.
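As a rough illustration of matching memory to a project, the sketch below picks the smallest RAM option that covers a model's estimated footprint. The option list, the 2GB runtime allowance, and the 4GB reserved for the operating system are hypothetical values chosen for illustration, not Thinvent specifications.

```python
from typing import Optional, Sequence

# Hypothetical RAM configurations in GB; check the actual options for each system.
RAM_OPTIONS_GB = (8, 16, 32, 64)


def required_ram_gb(params_billion: float, bits_per_weight: float,
                    runtime_overhead_gb: float = 2.0,
                    os_reserve_gb: float = 4.0) -> float:
    """Model weights plus assumed allowances for the inference runtime and the OS."""
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb + runtime_overhead_gb + os_reserve_gb


def pick_ram_config(params_billion: float, bits_per_weight: float,
                    options: Sequence[int] = RAM_OPTIONS_GB) -> Optional[int]:
    """Return the smallest RAM option that fits the estimate, or None if none does."""
    need = required_ram_gb(params_billion, bits_per_weight)
    for size in sorted(options):
        if size >= need:
            return size
    return None


if __name__ == "__main__":
    for params, bits in [(7, 4), (13, 4), (13, 8), (13, 16), (70, 4), (70, 16)]:
        choice = pick_ram_config(params, bits)
        label = f"{choice} GB" if choice is not None else "no listed option fits"
        print(f"{params}B @ {bits}-bit -> {label}")
```

Treat the result as a floor rather than a recommendation: the 32GB suggested for 13B+ models in the specification list leaves room for longer contexts, higher-precision weights, and other applications running alongside the model.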