A GPU server is a specialized computing system designed to leverage the parallel processing power of Graphics Processing Units (GPUs) for tasks beyond traditional graphics rendering. Unlike standard servers with CPUs optimized for sequential processing, GPU servers are equipped with multiple high-performance GPUs to accelerate massively parallel computations. This makes them essential for modern workloads in artificial intelligence, machine learning, scientific simulation, and high-performance computing (HPC).

Key Specifications and Architecture

The core of a GPU server is its combination of powerful CPUs and multiple GPU accelerators. Key technical specifications include:

- GPU Accelerators: Support for multiple high-end GPUs from vendors such as NVIDIA (e.g., A100, H100, L40S) or AMD (e.g., Instinct MI300X). Each GPU has thousands of parallel cores (NVIDIA CUDA cores or AMD stream processors) and a large pool of fast on-board memory, such as the high-bandwidth memory (HBM) on the A100, H100, and MI300X.
- CPU Platform: High-core-count server-grade CPUs (e.g., Intel Xeon Scalable or AMD EPYC processors) to manage data flow and orchestrate GPU tasks.
- Memory & Storage: Substantial system RAM (often 256 GB to several terabytes of DDR5) and fast NVMe SSD storage arrays, which keep data flowing to the GPUs and minimize I/O bottlenecks.
- Interconnect & Cooling: High-speed PCIe Gen 5 slots for GPU connectivity, with Gen 6 arriving on the newest platforms. GPU-to-GPU communication uses direct links such as NVIDIA NVLink or AMD Infinity Fabric. Robust cooling systems, increasingly liquid-based, manage the significant thermal output.
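The interconnect bullet is easier to appreciate with some arithmetic. The sketch below estimates one-direction PCIe bandwidth and the time needed to copy a batch of data from host memory to a single GPU.

It makes several assumptions:
- Gen 4 and Gen 5 run at 16 and 32 GT/s per lane with 128b/130b encoding.
- Packet-level protocol overhead is ignored, so real transfers are somewhat slower.
- Gen 6 uses a different encoding scheme and is left out.

The function names and the 10 GB batch size are illustrative only.

```python
# Rough PCIe link bandwidth and host-to-GPU copy time.
# Assumptions: 128b/130b line encoding (PCIe Gen 4/5); TLP headers and
# flow-control overhead are ignored, so these are upper bounds.

GT_PER_LANE = {4: 16.0, 5: 32.0}  # gigatransfers per second per lane


def pcie_bandwidth_gbps(gen: int, lanes: int = 16) -> float:
    """Approximate one-direction bandwidth of a PCIe link in GB/s."""
    if gen not in GT_PER_LANE:
        raise ValueError(f"unsupported PCIe generation: {gen}")
    bits_per_s = GT_PER_LANE[gen] * 1e9 * lanes * (128 / 130)
    return bits_per_s / 8 / 1e9


def transfer_seconds(num_bytes: float, gen: int, lanes: int = 16) -> float:
    """Time to move num_bytes to one GPU at the estimated line rate."""
    return num_bytes / (pcie_bandwidth_gbps(gen, lanes) * 1e9)


if __name__ == "__main__":
    batch_bytes = 10e9  # hypothetical 10 GB batch of training data
    for gen in (4, 5):
        bw = pcie_bandwidth_gbps(gen)
        secs = transfer_seconds(batch_bytes, gen)
        print(f"Gen {gen} x16: ~{bw:.1f} GB/s, 10 GB copied in ~{secs:.2f} s")
```

Under these assumptions, a x16 link reaches roughly 31.5 GB/s on Gen 4 and 63 GB/s on Gen 5. Moving up a generation therefore halves the copy time for the same batch.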

Primary Use Cases and Applications

GPU servers are deployed in scenarios requiring intense computational throughput:

- AI & Machine Learning: Training and inference for large language models (LLMs), computer vision, and other deep neural networks.
- Scientific Research: Running complex simulations for computational fluid dynamics and climate modeling, and accelerating genomic analysis.
- Media & Entertainment: Rendering high-resolution 3D animation and visual effects (VFX), and transcoding video at scale.
- Financial Modeling: Performing real-time risk analysis and running high-frequency trading algorithms.
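For LLM workloads, a common first sizing step is to check whether a model's weights fit in GPU memory. The sketch below makes these assumptions:
- Only the weights are counted, at 2 bytes per parameter in BF16/FP16.
- A hypothetical 80% of each GPU's memory is usable for weights.
- The weights can be split evenly across GPUs.

It ignores the KV cache and activations. For training, it also ignores gradients and optimizer state, all of which add substantially. The 70-billion-parameter model is an illustrative size, and all figures are in decimal gigabytes.

```python
import math

# Bytes needed to store one parameter in common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str = "bf16") -> float:
    """Memory for model weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def min_gpus_for_weights(
    num_params: float,
    gpu_memory_gb: float,
    dtype: str = "bf16",
    usable_fraction: float = 0.8,  # hypothetical headroom for runtime overhead
) -> int:
    """Smallest GPU count whose combined usable memory holds the weights.

    Assumes the weights can be sharded evenly across GPUs.
    """
    usable_per_gpu = gpu_memory_gb * usable_fraction
    return math.ceil(weight_memory_gb(num_params, dtype) / usable_per_gpu)


if __name__ == "__main__":
    params = 70e9  # illustrative 70B-parameter model
    print(f"Weights: {weight_memory_gb(params):.0f} GB in BF16")
    gpus = (("80 GB GPU (e.g., A100/H100)", 80), ("192 GB GPU (e.g., MI300X)", 192))
    for name, mem_gb in gpus:
        print(f"{name}: at least {min_gpus_for_weights(params, mem_gb)} GPU(s)")
```

With these assumptions, a 70B-parameter BF16 model holds about 140 GB of weights. That means at least three 80 GB GPUs, but a single 192 GB GPU is enough.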

CPU vs. GPU Server Comparison

| Feature | Traditional CPU Server | Dedicated GPU Server |
|---|---|---|
| Core Design | Fewer, more complex cores optimized for sequential tasks. | Thousands of smaller, simpler cores optimized for parallel processing. |
| Ideal Workload | General-purpose computing, databases, web servers, virtualization. | Parallelizable tasks: AI/ML, scientific computing, rendering. |
| Performance Profile | Excellent for single-threaded, latency-sensitive operations. | Exceptional for throughput-oriented, computationally heavy batch jobs. |
| Power & Cooling | Moderate requirements. | High power draw (a few hundred watts to roughly 1 kW per GPU, so a multi-GPU server can draw several kilowatts); requires advanced cooling. |
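The table's split between sequential and parallelizable workloads can be quantified with Amdahl's law. This is a standard, vendor-neutral model of the overall speedup when only part of a job is accelerated:

speedup = 1 / ((1 - p) + p / s)

Here p is the fraction of runtime that can be parallelized and s is the speedup of that fraction. The sketch below treats s as a hypothetical GPU acceleration factor and ignores data-transfer overhead.

```python
def amdahl_speedup(parallel_fraction: float, parallel_speedup: float) -> float:
    """Overall speedup predicted by Amdahl's law.

    parallel_fraction: share of the original runtime that can be parallelized (0..1).
    parallel_speedup: how much faster that share runs on the accelerator
        (a hypothetical input; data-transfer costs are not modeled).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if parallel_speedup <= 0:
        raise ValueError("parallel_speedup must be positive")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / parallel_speedup)


if __name__ == "__main__":
    # Hypothetical 100x acceleration of the parallel portion.
    for p in (0.5, 0.9, 0.95, 0.99):
        print(f"p={p:.2f}: overall speedup ~{amdahl_speedup(p, 100):.1f}x")
```

For example, with p = 0.95 and s = 100 the overall speedup is only about 16.8x, and it can never exceed 1 / (1 - p) = 20x. With p = 0.5 it stays below 2x. This is why GPU servers pay off mainly for workloads that are almost entirely parallelizable.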

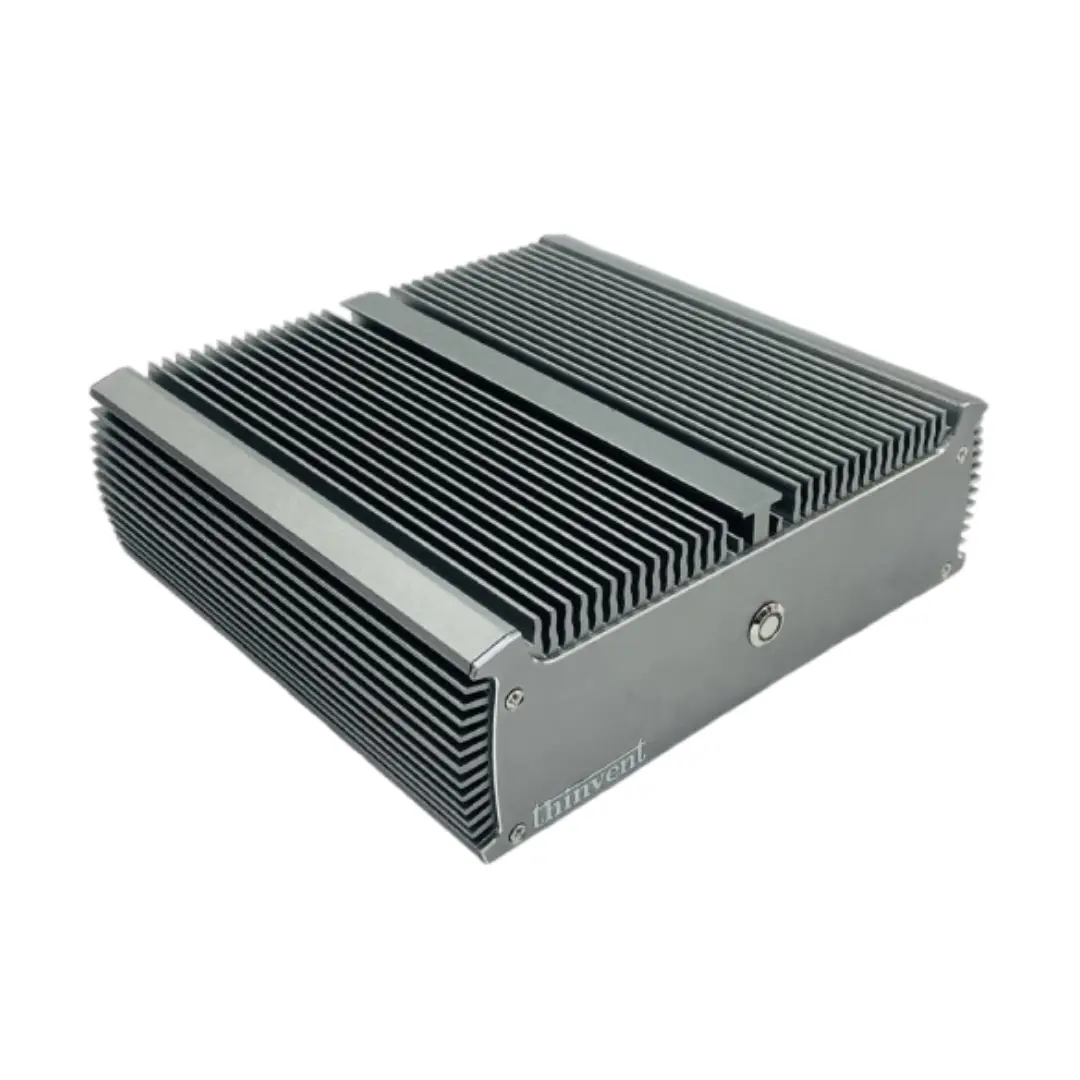

Thinvent's GPU Server Solutions

While Thinvent specializes in robust, fanless industrial computers and mini PCs for edge computing, our product philosophy of reliability and performance extends to scalable computing needs. For clients requiring GPU-accelerated performance, we provide consultation and integration services for server-grade solutions tailored to industrial AI, edge inference, and compact data center applications. Our expertise in building durable systems ensures that even high-performance computing nodes are designed for stability in demanding environments.